<p>friends don’t let friends use spicy autocarrot to generate <a href="/tags/passwords/" rel="tag">#passwords</a>… 💁♀️ </p><p>Your AI-generated password isn't random, it just looks that way </p><p>“AI security company Irregular looked at Claude, ChatGPT, and Gemini, and found all three <a href="/tags/genai/" rel="tag">#GenAI</a> tools put forward seemingly strong passwords that were, in fact, easily guessable.” <br>… <br>“Irregular found that all three AI <a href="/tags/chatbots/" rel="tag">#chatbots</a> produced passwords with common patterns, and if hackers understood them, they could use that knowledge to inform their brute-force strategies.” <br>… <br>“Knowing the patterns also reveals how many times <a href="/tags/llms/" rel="tag">#LLMs</a> are used to create passwords in open source projects. The researchers showed that by searching common character sequences across <a href="/tags/github/" rel="tag">#GitHub</a> and the wider web, queries return test code, setup instructions, technical documentation, and more.” </p><p><a href="https://www.theregister.com/2026/02/18/generating_passwords_with_llms/" rel="nofollow" class="ellipsis" title="www.theregister.com/2026/02/18/generating_passwords_with_llms/"><span class="invisible">https://</span><span class="ellipsis">www.theregister.com/2026/02/18</span><span class="invisible">/generating_passwords_with_llms/</span></a></p>
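For contrast with the patterned passwords described above, here is a minimal sketch of drawing a password from the operating system's cryptographically secure random source using Python's standard `secrets` module. The function names, the default length of 20, and the 94-character alphabet are illustrative assumptions, not anything taken from the Irregular research or the linked article.

```python
import math
import secrets
import string

# Assumed alphabet for illustration: ASCII letters, digits, and punctuation.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20, alphabet: str = ALPHABET) -> str:
    """Pick each character independently and uniformly using the OS CSPRNG."""
    if length <= 0:
        raise ValueError("length must be positive")
    return "".join(secrets.choice(alphabet) for _ in range(length))


def entropy_bits(length: int, alphabet_size: int) -> float:
    """Entropy in bits of a uniformly random string over the given alphabet."""
    return length * math.log2(alphabet_size)


if __name__ == "__main__":
    print(generate_password())
    print(f"{entropy_bits(20, len(ALPHABET)):.1f} bits")
```

Because every character is drawn uniformly and independently, knowing how the generator works gives an attacker no shortcut: a 20-character password over this alphabet carries roughly 131 bits of entropy. A password manager's built-in generator does the same job.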

genai

<p><a href="/tags/psa/" rel="tag">#PSA</a> <a href="/tags/authors/" rel="tag">#Authors</a> <a href="/tags/writing/" rel="tag">#Writing</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/meta/" rel="tag">#Meta</a> <a href="/tags/anthropic/" rel="tag">#Anthropic</a> <a href="/tags/anthropicclassaction/" rel="tag">#AnthropicClassAction</a></p><p>Deadline to join the class action against Meta/Anthropic: Friday August 15, 2025.</p><p>"Submitting your information here does not make you a member of the Class. But, if you are a member of the class, submitting your information will help us direct formal notice of the class action at the appropriate time."</p><p><a href="https://www.lieffcabraser.com/anthropic-author-contact/" rel="nofollow" class="ellipsis" title="www.lieffcabraser.com/anthropic-author-contact/"><span class="invisible">https://</span><span class="ellipsis">www.lieffcabraser.com/anthropi</span><span class="invisible">c-author-contact/</span></a></p><p>Gift link to The Atlantic database search tool:<br><a href="https://www.theatlantic.com/technology/archive/2025/03/search-libgen-data-set/682094/?gift=uvbqc-y7RgcozRTSY7kANHrt8LyDF9nPyN5h5Dmp4rE" rel="nofollow" class="ellipsis" title="www.theatlantic.com/technology/archive/2025/03/search-libgen-data-set/682094/?gift=uvbqc-y7RgcozRTSY7kANHrt8LyDF9nPyN5h5Dmp4rE"><span class="invisible">https://</span><span class="ellipsis">www.theatlantic.com/technology</span><span class="invisible">/archive/2025/03/search-libgen-data-set/682094/?gift=uvbqc-y7RgcozRTSY7kANHrt8LyDF9nPyN5h5Dmp4rE</span></a></p><p>Please share widely!</p>

Edited 235d ago

<p>This misguided trend has resulted, in our opinion, in an unfortunate state of affairs: an insistence on building NLP systems using ‘large language models’ (LLM) that require massive computing power in a futile attempt at trying to approximate the infinite object we call natural language by trying to memorize massive amounts of data. In our opinion this pseudo-scientific method is not only a waste of time and resources, but it is corrupting a generation of young scientists by luring them into thinking that language is just data – a path that will only lead to disappointments and, worse yet, to hampering any real progress in natural language understanding (NLU). Instead, we argue that it is time to re-think our approach to NLU work since we are convinced that the ‘big data’ approach to NLU is not only psychologically, cognitively, and even computationally implausible, but, and as we will show here, this blind data-driven approach to NLU is also theoretically and technically flawed.<br></p>From Machine Learning Won't Solve Natural Language Understanding, <a href="https://thegradient.pub/machine-learning-wont-solve-the-natural-language-understanding-challenge/" rel="nofollow" class="ellipsis" title="thegradient.pub/machine-learning-wont-solve-the-natural-language-understanding-challenge/"><span class="invisible">https://</span><span class="ellipsis">thegradient.pub/machine-learni</span><span class="invisible">ng-wont-solve-the-natural-language-understanding-challenge/</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/nlp/" rel="tag">#NLP</a> <a href="/tags/nlu/" rel="tag">#NLU</a> <a href="/tags/gpt/" rel="tag">#GPT</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a> <a href="/tags/claude/" rel="tag">#Claude</a> <a href="/tags/gemini/" rel="tag">#Gemini</a> <a 
href="/tags/llama/" rel="tag">#LLAMA</a><br>

<p>Page Against the Machine: On the Poetics of AI Refusal</p><p>Pip Thornton documents and debates some of the outputs of ‘Writing the Wrongs of AI’, a project which explored creative ways to demonstrate the power that human words, poetics and writing might have in resisting the influence of artificial intelligence in the literary sphere and beyond.</p><p><a href="https://www.scottishpoetrylibrary.org.uk/2025/09/page-against-the-machine-on-the-poetics-of-ai-refusal/" rel="nofollow" class="ellipsis" title="www.scottishpoetrylibrary.org.uk/2025/09/page-against-the-machine-on-the-poetics-of-ai-refusal/"><span class="invisible">https://</span><span class="ellipsis">www.scottishpoetrylibrary.org.</span><span class="invisible">uk/2025/09/page-against-the-machine-on-the-poetics-of-ai-refusal/</span></a></p><p><a href="/tags/scottish/" rel="tag">#Scottish</a> <a href="/tags/literature/" rel="tag">#literature</a> <a href="/tags/poetry/" rel="tag">#poetry</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#genAI</a> <a href="/tags/generativeai/" rel="tag">#generativeAI</a></p>

Re: LB: time to move off Chrome.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/web/" rel="tag">#web</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/google/" rel="tag">#Google</a> <a href="/tags/chrome/" rel="tag">#Chrome</a><br>

<p>The true power of <a href="/tags/genai/" rel="tag">#genAI</a> is not technological, but rhetorical: almost all conversations about it are about what executives are saying it will do "one day" or "soon" rather than what we actually see (and, of course, there is no mention of the business model, which doesn't exist).</p><p>We are told to simultaneously believe AI is so "early days" as to excuse any lack of real usefulness, and that it is so established - even "too big to fail" - that we are not permitted to imagine a future without it.</p>

If current-generation LLM-based chatbots can drive people to commit crimes or even take their own lives, what do you suppose Neuralink would do to people?<br><br>The first thing I said to the person who suggested to me that human brains might be directly hooked to a computer, whenever that was, was "everyone will go insane immediately". I still believe that, but now we're beginning to see evidence.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/neuralink/" rel="tag">#Neuralink</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/hci/" rel="tag">#HCI</a> <a href="/tags/brainimplants/" rel="tag">#BrainImplants</a><br>

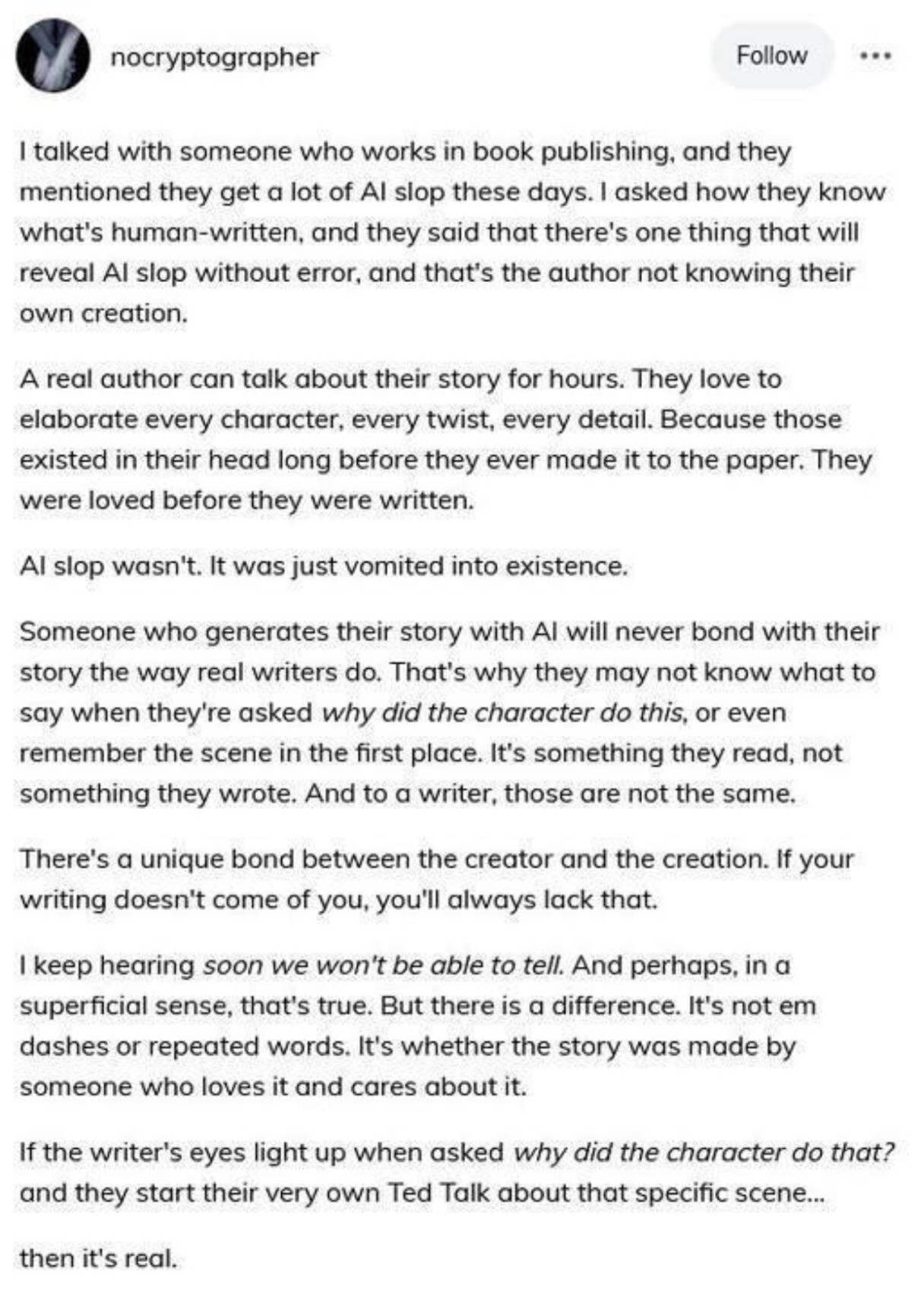

<p>Writing vs AI slop:</p><p>"AI slop is... vomited into existence...something they read, not something they wrote. And to a writer those are not the same."</p><p><a href="/tags/writing/" rel="tag">#Writing</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <span class="h-card"><a href="https://fedigroups.social/@bookstodon" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>bookstodon</span></a></span></p>

<p>"The Wikimedia Foundation, the nonprofit organization that hosts Wikipedia, says that it’s seeing a significant decline in human traffic to the online encyclopedia because more people are getting the information that’s on Wikipedia via generative AI chatbots that were trained on its articles and search engines that summarize them without actually clicking through to the site."</p><p>Generative AI keeps on ruining everything. </p><p><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#genai</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/wikipedia/" rel="tag">#wikipedia</a> <a href="/tags/internet/" rel="tag">#internet</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/news/" rel="tag">#news</a></p><p><a href="https://www.404media.co/wikipedia-says-ai-is-causing-a-dangerous-decline-in-human-visitors/" rel="nofollow" class="ellipsis" title="www.404media.co/wikipedia-says-ai-is-causing-a-dangerous-decline-in-human-visitors/"><span class="invisible">https://</span><span class="ellipsis">www.404media.co/wikipedia-says</span><span class="invisible">-ai-is-causing-a-dangerous-decline-in-human-visitors/</span></a></p>

<p>The AI industry wants us to believe AI superintelligence is the real threat from generative AI.</p><p>But that narrative was crafted to distract from the many ways genAI is being used to tear our societies apart, as we saw this week when a deepfake video rocked the Irish election. It must be reined in.</p><p><a href="https://disconnect.blog/generative-ai-is-a-societal-disaster/" rel="nofollow" class="ellipsis" title="disconnect.blog/generative-ai-is-a-societal-disaster/"><span class="invisible">https://</span><span class="ellipsis">disconnect.blog/generative-ai-</span><span class="invisible">is-a-societal-disaster/</span></a></p><p><a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/genai/" rel="tag">#genai</a> <a href="/tags/generativeai/" rel="tag">#generativeai</a> <a href="/tags/deepfake/" rel="tag">#deepfake</a> <a href="/tags/politics/" rel="tag">#politics</a> <a href="/tags/ireland/" rel="tag">#ireland</a></p>

<p>“Universities that encourage students to use <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a>? I’m stunned to hear something like that.”</p><p>Luc Steels – the “godfather of <a href="/tags/ai/" rel="tag">#AI</a> research in Belgium” – is one of the Belgian signatories of a recent open letter to “stop the uncritical adoption of AI technologies in academia”, initiated by <span class="h-card"><a href="https://scholar.social/@olivia" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>olivia</span></a></span> and <span class="h-card"><a href="https://scholar.social/@Iris" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>Iris</span></a></span>. </p><p>I spoke with Steels, computational linguist Katrien Beuls, computer scientist <span class="h-card"><a href="https://scholar.social/@wim_v12e" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>wim_v12e</span></a></span>, and others about their resistance to <a href="/tags/genai/" rel="tag">#genAI</a> in academia.</p><p><a href="https://apache.be/2025/10/24/belgian-ai-scientists-resist-use-ai-academia?cdlnk=a04yMGFEUks1WEI2Y0lFT2lUR0x3MHBKNjZuSVY0WUxJN2JwNENOamNsM2ZzZWlrdnlEQmpUU2dqV3ZiKzhBaE5UdXh2MTVYSjYycmMwSG0yOVhpdzBEb2k4bVFOS0xHWEE9PTo6NTFkZTFjYWZkMjUwOGNmNDA2ZjA2NDVkOTcwNzA4Zjk%3D" rel="nofollow" class="ellipsis" title="apache.be/2025/10/24/belgian-ai-scientists-resist-use-ai-academia?cdlnk=a04yMGFEUks1WEI2Y0lFT2lUR0x3MHBKNjZuSVY0WUxJN2JwNENOamNsM2ZzZWlrdnlEQmpUU2dqV3ZiKzhBaE5UdXh2MTVYSjYycmMwSG0yOVhpdzBEb2k4bVFOS0xHWEE9PTo6NTFkZTFjYWZkMjUwOGNmNDA2ZjA2NDVkOTcwNzA4Zjk%3D"><span class="invisible">https://</span><span class="ellipsis">apache.be/2025/10/24/belgian-a</span><span 
class="invisible">i-scientists-resist-use-ai-academia?cdlnk=a04yMGFEUks1WEI2Y0lFT2lUR0x3MHBKNjZuSVY0WUxJN2JwNENOamNsM2ZzZWlrdnlEQmpUU2dqV3ZiKzhBaE5UdXh2MTVYSjYycmMwSG0yOVhpdzBEb2k4bVFOS0xHWEE9PTo6NTFkZTFjYWZkMjUwOGNmNDA2ZjA2NDVkOTcwNzA4Zjk%3D</span></a></p>

Edited 165d ago

<p>JesusGPT…</p><p>Former CEO of Intel Building Special AI to Bring About Second Coming of Christ</p><p><a href="https://futurism.com/artificial-intelligence/former-ceo-intel-ai-christ" rel="nofollow" class="ellipsis" title="futurism.com/artificial-intelligence/former-ceo-intel-ai-christ"><span class="invisible">https://</span><span class="ellipsis">futurism.com/artificial-intell</span><span class="invisible">igence/former-ceo-intel-ai-christ</span></a></p><p><a href="/tags/religion/" rel="tag">#religion</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/artificialintelligence/" rel="tag">#ArtificialIntelligence</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/machinelearning/" rel="tag">#MachineLearning</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/technology/" rel="tag">#technology</a> <a href="/tags/bigtech/" rel="tag">#BigTech</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#generativeAI</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/meta/" rel="tag">#Meta</a> <a href="/tags/google/" rel="tag">#Google</a> <a href="/tags/openai/" rel="tag">#OpenAI</a> <a href="/tags/chatgpt/" rel="tag">#ChatGPT</a></p>

I came across a post on LinkedIn about evolutionary computation, and opted to post this in response:<br><p>I never stopped using evolutionary computation. I'm even weirder and use coevolutionary algorithms. Unlike EC, the latter have a bad reputation as being difficult to apply, but if you know what you're doing (e.g. by reading my publications 😉) they're quite powerful in certain application areas. I've successfully applied them to designing resilient physical systems, discovering novel game-playing strategies, and driving online tutoring systems, among other areas. They can inform more conventional multi-objective optimization.<br><br>Many challenging problems are not easily "vectorized" or "numericized", but might have straightforward representations in discrete data structures. Combinatorial optimization problems can fall under this umbrella. Techniques that work directly with those representations can be orders of magnitude faster/smaller/cheaper than techniques requiring another layer of representation (natural language for LLMs, vectors of real values for neural networks). Sure, given enough time and resources clever people can work out a good numerical re-representation that allows a deep neural network to solve a problem, or prompt engineer an LLM. But why whack at your problem with a hammer when you have a precision instrument?<br></p>I started to put up notes about (my way of conceiving) coevolutionary algorithms on my web site, <a href="https://bucci.onl/notes/coevolutionary-algorithms" rel="nofollow">here</a>. I stopped because it's a ton of work and nobody reads these as far as I can tell. 
Sound off if you read anything there!<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/evolutionarycomputation/" rel="tag">#EvolutionaryComputation</a> <a href="/tags/geneticalgorithms/" rel="tag">#GeneticAlgorithms</a> <a href="/tags/geneticprogramming/" rel="tag">#GeneticProgramming</a> <a href="/tags/evolutionaryalgorithms/" rel="tag">#EvolutionaryAlgorithms</a> <a href="/tags/coevolutionaryalgorithms/" rel="tag">#CoevolutionaryAlgorithms</a> <a href="/tags/cooptimization/" rel="tag">#Cooptimization</a> <a href="/tags/combinatorialoptimization/" rel="tag">#CombinatorialOptimization</a> <a href="/tags/optimization/" rel="tag">#optimization</a><br>
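To make the point about working directly on discrete representations concrete, here is a minimal sketch of a plain (1+1) evolutionary algorithm that mutates a permutation in place of any vector encoding. It is a generic textbook-style illustration, not a coevolutionary algorithm and not the method from the notes linked above; the tiny tour-length problem, the function names, and the parameters are all assumptions made for the example.

```python
import math
import random


def tour_length(tour, cities):
    """Total length of the closed tour visiting cities in the given order."""
    return sum(
        math.dist(cities[tour[i]], cities[tour[(i + 1) % len(tour)]])
        for i in range(len(tour))
    )


def evolve_tour(cities, generations=2000, seed=0):
    """(1+1) evolutionary algorithm operating directly on a permutation.

    The candidate solution is a list of city indices. Mutation swaps two
    positions, so every child is still a valid permutation and no numeric
    re-encoding or repair step is needed.
    """
    rng = random.Random(seed)
    best = list(range(len(cities)))
    rng.shuffle(best)
    best_len = tour_length(best, cities)
    for _ in range(generations):
        child = best[:]
        i, j = rng.sample(range(len(child)), 2)
        child[i], child[j] = child[j], child[i]
        child_len = tour_length(child, cities)
        # Keep the child if it is no worse than the parent.
        if child_len <= best_len:
            best, best_len = child, child_len
    return best, best_len


if __name__ == "__main__":
    grid = [(x, y) for x in range(3) for y in range(2)]
    order, length = evolve_tour(grid)
    print(order, round(length, 3))
```

The whole search state is one small list of integers and the only operator is a swap, which is the kind of economy the post is describing. A swap-only hill climber like this can stall in local optima, so treat it as a starting point, not a tuned solver.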

Edited 91d ago

I don't have anything special to say about this other than compare and contrast:<br><br>- <a href="https://en.wikipedia.org/wiki/Chatbot_psychosis" rel="nofollow">AI psychosis</a>: "Journalistic accounts describe individuals who have developed strong beliefs that chatbots are sentient, are channeling spirits, or are revealing conspiracies, sometimes leading to personal crises or criminal acts."<br>- <a href="https://www.nature.com/articles/d41586-025-03020-9" rel="nofollow">Can AI chatbots trigger psychosis? What the science says</a>: "Chatbots can reinforce delusional beliefs, and, in rare cases, users have experienced psychotic episodes."<br><br>with<br><br>- <a href="https://pmc.ncbi.nlm.nih.gov/articles/PMC9938787/" rel="nofollow">New-Onset Hyperreligiosity, Demonic Hallucinations, and Apocalyptic Delusions following COVID-19 Infection</a>: "There has been an increasing body of literature suggesting a correlation between COVID-19 infection and psychosis."<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/aipsychosis/" rel="tag">#AIPsychosis</a> <a href="/tags/chatbotpsychosis/" rel="tag">#ChatbotPsychosis</a> <a href="/tags/mentalhealth/" rel="tag">#MentalHealth</a> <a href="/tags/covid/" rel="tag">#COVID</a> <a href="/tags/covid19/" rel="tag">#COVID19</a> <a href="/tags/covidisnotover/" rel="tag">#COVIDIsNotOver</a><br>

Edited 159d ago

Regarding the last boost: I find LibreOffice to be quite good, it's offline, it's available for Windows if you use that, and it's free. <a href="https://www.libreoffice.org" rel="nofollow"><span class="invisible">https://</span>www.libreoffice.org</a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/noai/" rel="tag">#NoAI</a> <a href="/tags/microsoft/" rel="tag">#Microsoft</a> <a href="/tags/copilot/" rel="tag">#Copilot</a> <a href="/tags/microsoftoffice/" rel="tag">#MicrosoftOffice</a> <a href="/tags/libreoffice/" rel="tag">#LibreOffice</a> <a href="/tags/foss/" rel="tag">#foss</a><br>

The other day I had the intrusive thought<br><p>AI is intellectual Viagra<br></p>and it hasn't left me so I am exorcising it here. I'm sorry in advance for any pain this might cause.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/diffusionmodels/" rel="tag">#DiffusionModels</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/coding/" rel="tag">#coding</a> <a href="/tags/software/" rel="tag">#software</a> <a href="/tags/softwaredevelopment/" rel="tag">#SoftwareDevelopment</a> <a href="/tags/writing/" rel="tag">#writing</a> <a href="/tags/art/" rel="tag">#art</a> <a href="/tags/visualart/" rel="tag">#VisualArt</a><br>

This is a long time coming. I've been posting about the decline of arXiv's CS category for a long time now, and even had a few conversations with someone I know who works there about it. Personally, I think the slop started in 2018--prior to generative AI slop--when the CS category at arXiv began the unsustainable exponential growth in submissions that has continued till today. An increasing number of what amounted to corporate whitepapers and other marketing materials were being posted on arXiv to give them the appearance of scientific credibility. There was a fairly clear arXiv-to-Nature pipeline. Citation counts were pumped as some of the scientometric services count arXiv "articles" as citations, and some researchers adopted the bad scholarly habit of citing arXiv preprints instead of the final publication. It was and still is a mess. My understanding is that arXiv was meant as a place for people to put high-quality but pre-publication articles, but at least in the CS category it's drifted quite far from that.<br><br>I gather they've finally taken this measure because of the preponderance of AI-generated slop, but with any luck these other issues will improve too. 
The arXiv press release states “Review/survey articles or position papers submitted to arXiv without this documentation will be likely to be rejected and not appear on arXiv” so it does sound like they are acknowledging the other problems and intend to enforce their rules more strictly in the future.<br><br>"arXiv says it will no longer accept Computer Science papers that are still under review due to the wave of AI-generated ones it has received."<br>From <a href="https://infosec.exchange/users/josephcox/statuses/115486903712973154" rel="nofollow" class="ellipsis" title="infosec.exchange/users/josephcox/statuses/115486903712973154"><span class="invisible">https://</span><span class="ellipsis">infosec.exchange/users/josephc</span><span class="invisible">ox/statuses/115486903712973154</span></a><br><br><a href="/tags/arxiv/" rel="tag">#arXiv</a> <a href="/tags/preprint/" rel="tag">#preprint</a> <a href="/tags/cs/" rel="tag">#CS</a> <a href="/tags/spam/" rel="tag">#spam</a> <a href="/tags/aislop/" rel="tag">#AISlop</a> <a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a><br>

Edited 154d ago

The wrongness density is approaching critical today:<br><br>Headline: The military’s new AI says ‘hypothetical’ boat strike scenario ‘unambiguously illegal’<br><br><a href="https://san.com/cc/the-militarys-new-ai-says-hypothetical-boat-strike-scenario-unambiguously-illegal/" rel="nofollow" class="ellipsis" title="san.com/cc/the-militarys-new-ai-says-hypothetical-boat-strike-scenario-unambiguously-illegal/"><span class="invisible">https://</span><span class="ellipsis">san.com/cc/the-militarys-new-a</span><span class="invisible">i-says-hypothetical-boat-strike-scenario-unambiguously-illegal/</span></a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/militaryai/" rel="tag">#MilitaryAI</a> <a href="/tags/usmilitary/" rel="tag">#USMilitary</a> <a href="/tags/saberrattling/" rel="tag">#SaberRattling</a> <a href="/tags/geopolitics/" rel="tag">#geopolitics</a> <a href="/tags/uspol/" rel="tag">#USPol</a><br><br>cc <span class="h-card"><a href="https://circumstances.run/@davidgerard" class="u-url mention" rel="nofollow noopener noreferrer" target="_blank">@<span>davidgerard</span></a></span><br>

Edited 116d ago

<p>This is an excellent video. This is the message. Perhaps we need to refine it more. Find ways to communicate it more clearly. But this is the correct take on LLMs, so-called-AI and the proliferation of these tools to the general public. <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/llms/" rel="tag">#llms</a> <a href="/tags/ai/" rel="tag">#ai</a> <a href="/tags/genai/" rel="tag">#genAI</a> <a href="/tags/video/" rel="tag">#video</a> <a href="/tags/slop/" rel="tag">#slop</a> <a href="/tags/slopocalypse/" rel="tag">#slopocalypse</a> <a href="/tags/enshittification/" rel="tag">#enshittification</a> </p><p><a href="https://www.youtube.com/watch?v=4lKyNdZz3Vw" rel="nofollow" class="ellipsis" title="www.youtube.com/watch?v=4lKyNdZz3Vw"><span class="invisible">https://</span><span class="ellipsis">www.youtube.com/watch?v=4lKyNd</span><span class="invisible">Zz3Vw</span></a></p>

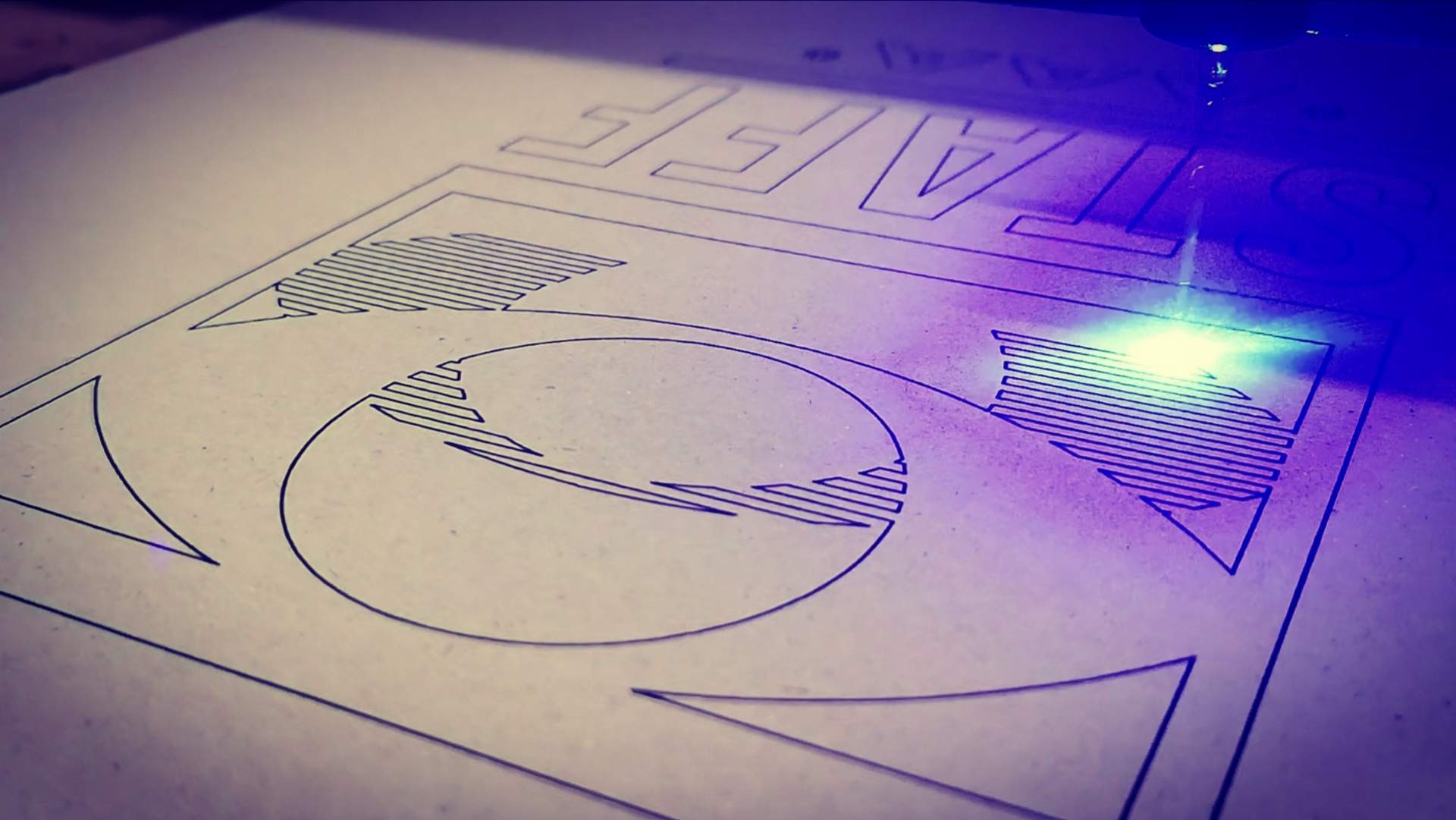

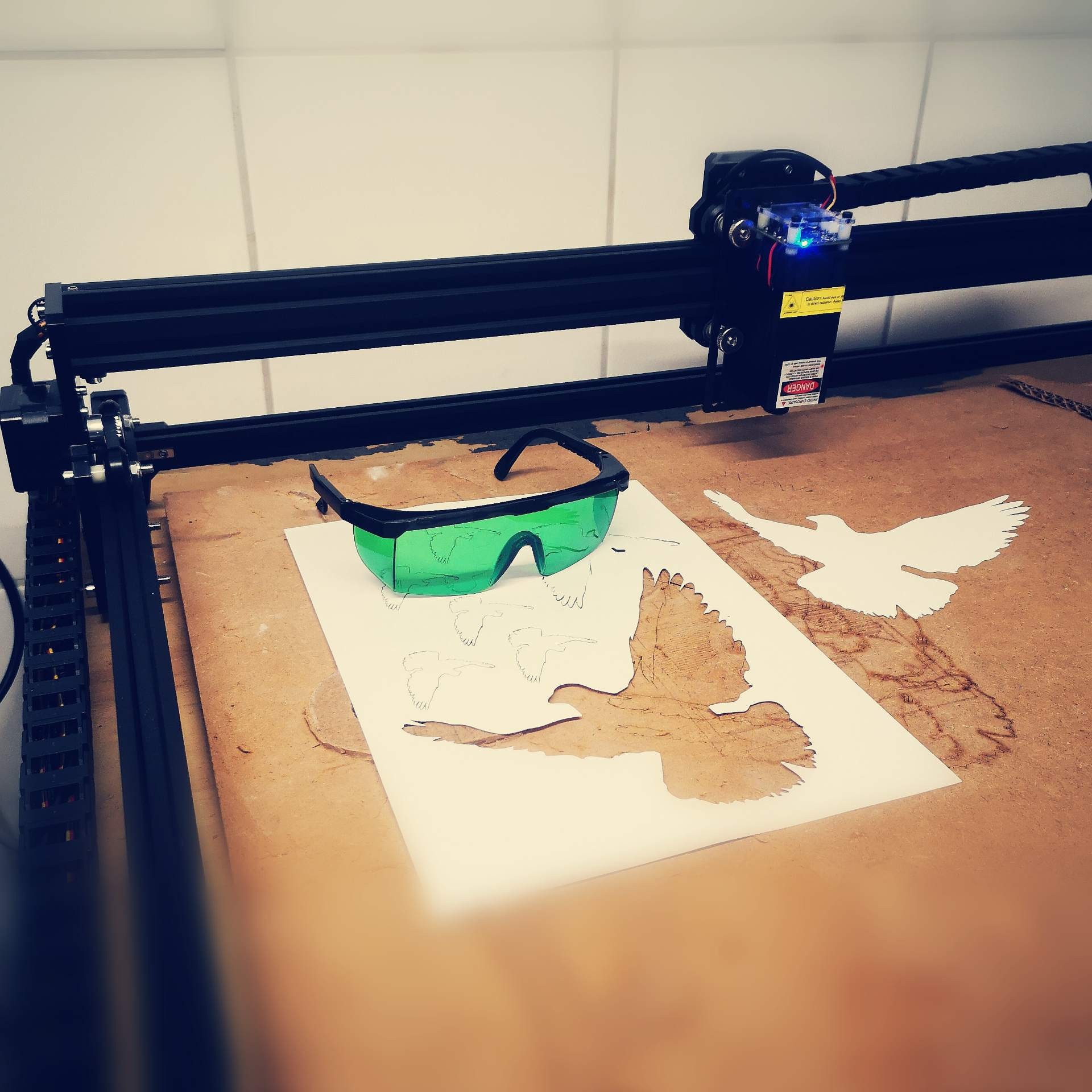

<p>Are you wondering why it's more expensive to rent an <a href="/tags/adobeillustrator/" rel="tag">#AdobeIllustrator</a> license for a year than to buy one of those extremely handy large-format <a href="/tags/lasercutter/" rel="tag">#lasercutter</a> and <a href="/tags/engraving/" rel="tag">#engraving</a> machines?</p><p>Well, these <a href="/tags/cuttingedge/" rel="tag">#cuttingedge</a> devices are increasingly affordable and easy to assemble, while old <a href="/tags/ai/" rel="tag">#Ai</a>, still <a href="/tags/overrated/" rel="tag">#overrated</a> and <a href="/tags/overpriced/" rel="tag">#overpriced</a>, simply can't keep generating profits for <a href="/tags/adobey/" rel="tag">#adOBEY</a> <a href="/tags/shareholders/" rel="tag">#shareholders</a>. Not without the <a href="/tags/firefly/" rel="tag">#firefly</a> branded <a href="/tags/enshitification/" rel="tag">#enshitification</a> of <a href="/tags/genai/" rel="tag">#genAi</a>. </p><p>There's no question for me: <a href="/tags/inkscape/" rel="tag">#Inkscape</a> is ahead with <a href="/tags/svg/" rel="tag">#SVG</a> when it comes to file exchange, and with <a href="/tags/grbl/" rel="tag">#grbl</a> <a href="/tags/gcode/" rel="tag">#gcode</a>* <a href="/tags/extensions/" rel="tag">#extensions</a> as well as <a href="/tags/interoperability/" rel="tag">#interoperability</a> with <a href="/tags/postprocessors/" rel="tag">#postprocessors</a> like <a href="/tags/lasergrbl/" rel="tag">#LaserGRBL</a>, <a href="/tags/linuxcnc/" rel="tag">#LinuxCNC</a> or <a href="/tags/ugs/" rel="tag">#UGS</a>. Not to mention that it works with <a href="/tags/silhouette/" rel="tag">#Silhouette</a> <a href="/tags/plotters/" rel="tag">#plotters</a> directly, even without <a href="/tags/cameo/" rel="tag">#Cameo</a>'s studio software. 
(*Thanks for the improvement) </p><p><a href="/tags/thinkdifferent/" rel="tag">#thinkDifferent</a> <a href="/tags/stayrebel/" rel="tag">#stayRebel</a> <br><a href="/tags/drawfreely/" rel="tag">#drawFreely</a> <a href="/tags/donate2improveit/" rel="tag">#donate2improveIT</a> <br><a href="/tags/download/" rel="tag">#download</a> here... <a href="https://inkscape.org" rel="nofollow"><span class="invisible">https://</span>inkscape.org</a></p>

Edited 140d ago

Massive compute power applied to massive data sets can produce outcomes that are worse at the task they’re (ostensibly) intended for than much simpler, easier to understand, less wasteful, and less intrusive data-light methods. It requires an extreme form of bias to believe that big compute + big data is always better.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/tech/" rel="tag">#tech</a> <a href="/tags/dev/" rel="tag">#dev</a> <a href="/tags/datascience/" rel="tag">#DataScience</a> <a href="/tags/science/" rel="tag">#science</a> <a href="/tags/computerscience/" rel="tag">#ComputerScience</a> <a href="/tags/ecologicalrationality/" rel="tag">#EcologicalRationality</a><br>

Edited 128d ago

<p>The present perspective outlines how epistemically baseless and ethically pernicious paradigms are recycled back into the scientific literature via machine learning (ML) and explores connections between these two dimensions of failure. We hold up the renewed emergence of physiognomic methods, facilitated by ML, as a case study in the harmful repercussions of ML-laundered junk science. A summary and analysis of several such studies is delivered, with attention to the means by which unsound research lends itself to social harms. We explore some of the many factors contributing to poor practice in applied ML. In conclusion, we offer resources for research best practices to developers and practitioners.<br></p>From The reanimation of pseudoscience in machine learning and its ethical repercussions here: <a href="https://www.cell.com/patterns/fulltext/S2666-3899(24)00160-0" rel="nofollow" class="ellipsis" title="www.cell.com/patterns/fulltext/S2666-3899(24)00160-0"><span class="invisible">https://</span><span class="ellipsis">www.cell.com/patterns/fulltext</span><span class="invisible">/S2666-3899(24)00160-0</span></a>. It's open access.<br><br>In other words ML--which includes generative AI--is smuggling long-disgraced pseudoscientific ideas back into "respectable" science, and rejuvenating the harms such ideas cause.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/machinelearning/" rel="tag">#MachineLearning</a> <a href="/tags/ml/" rel="tag">#ML</a> <a href="/tags/aiethics/" rel="tag">#AIEthics</a> <a href="/tags/science/" rel="tag">#science</a> <a href="/tags/pseudoscience/" rel="tag">#pseudoscience</a> <a href="/tags/junkscience/" rel="tag">#JunkScience</a> <a href="/tags/eugenics/" rel="tag">#eugenics</a> <a href="/tags/physiognomy/" rel="tag">#physiognomy</a><br>

Edited 128d ago

Mel Andrews on the connections between a naive belief in scientific objectivity (facts and data are "real" and "correct" and "neutral") and eugenics:<br><p>Francis Galton, pioneering figure of the eugenics movement, believed that good research practice should consist in “gathering as many facts as possible without any theory or general principle that might prejudice a neutral and objective view of these facts” (Jackson et al., 2005). Karl Pearson, statistician and fellow purveyor of eugenicist methods, approached research with a similar ethos: “theorizing about the material basis of heredity or the precise physiological or causal significance of observational results, Pearson argues, will do nothing but damage the progress of the science” (Pence, 2011). In collaborative work with Pearson, Weldon emphasised the superiority of data-driven methods which were capable of delivering truths about nature “without introducing any theory” (Weldon, 1895).<br></p>From The Immortal Science of ML: Machine Learning & the Theory-Free Ideal.<br><br>I've lost the reference, but I suspect it was Meredith Whittaker who's written and spoken about the big data turn at Google, where it was understood that having and collecting massive datasets allowed them to eschew model-building.<br><br>The core idea being critiqued here is that there's a kind of scientific view from nowhere: a theory-free, value-free, model-free, bias-free way of observing the world that will lead to Truth; and that it's the task of the scientist to approximate this view from nowhere as well as possible.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llms/" rel="tag">#LLMs</a> <a href="/tags/science/" rel="tag">#science</a> <a href="/tags/datascience/" rel="tag">#DataScience</a> <a href="/tags/scientificobjectivity/" rel="tag">#ScientificObjectivity</a> <a href="/tags/eugenics/" rel="tag">#eugenics</a> <a 
href="/tags/viewfromnowhere/" rel="tag">#ViewFromNowhere</a><br>

The rhetoric that limiting or banning AI/generative AI/LLM/diffusion model use is "ableist" or "gatekeeping" is the latest desperate attempt to find an angle through which to force this technology into our lives against our collective will. We need to reject this narrative. Common as it is, it simply doesn't scan. It reads to me as an attempt to co-opt the language of social justice to shame people into accepting an unjust and largely failing technology that they are rightfully rejecting.<br><br>Think it through. If you don't accept the use of climate-destroying, electricity-and-fresh-water-sapping, job-destroying, economy-thrashing--and yet mediocre or poorly performing!--technology created by multi-trillion-dollar sociopathic entities, then you are preventing people with less privilege than you have from living their best lives. You are preventing them from learning how to code. You are preventing them from obtaining coveted jobs in the tech sector. You are preventing them from having access to information. You, personally, are responsible for all this. Not the multi-trillion-dollar sociopathic entities who've not only created this technology and forced it on us but contributed to creating the less-privileged conditions of the people you are supposedly responsible for with your individual choices. Not the governments that neglected to enforce existing laws that would have prevented such multi-trillion-dollar sociopathic entities from forming in the first place, let alone creating such a technology--while also creating the conditions that led to people being less privileged. No, they are not responsible. You are. I am.<br><br>That doesn't make any sense.<br><br>Neoliberalism's greatest trick has been to shift responsibility for any problems away from the powerful and onto individuals who are not empowered to fix anything, all while convincing everyone that this is right and proper. 
Large corporations do not cause a plastic pollution problem; you and I do, by not separating our recycling. Large corporations, governments and militaries do not cause CO2 pollution and climate damage; you and I do, by using incandescent lightbulbs and non-electric/non-hybrid cars or eating meat. Lack of regulation and large agribusiness practices are not to blame for poor food quality; you and I are, for buying what they sell instead of going organic and joining a CSA. Etc. ad infinitum. Large, powerful entities routinely generate a problem, then tell you and me that we are responsible for the problem as well as for fixing it. Never mind that these entities could nudge their own behavior a bit and move the needle on the problem far more than masses of people could no matter how organized they were. Never mind that these entities could be constrained from causing such problems in the first place.<br><br>We are watching a new variation of this pattern come into being right in front of our eyes with AI. We should stop accepting these fictions. You are neither ableist nor a gatekeeper for resisting AI. You are, instead, attempting to forestall the further degradation of conditions for everyone, which starts this same cycle anew.<br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/llm/" rel="tag">#LLM</a> <a href="/tags/diffusionmodels/" rel="tag">#DiffusionModels</a> <a href="/tags/neoliberalism/" rel="tag">#neoliberalism</a> <a href="/tags/depoliticization/" rel="tag">#depoliticization</a><br>


<p>Before the automobile industry invented the catalytic converter, the costs of reducing air pollution seemed astronomical, enough to bankrupt the entire industry. After they invented the catalytic converter, the costs were manageable. And they only invented it because they were faced with the threat of being shut down.<br></p>Industries creating harms often claim that controls and regulations are impossible, would bankrupt them, etc., trying to make their existence into a zero-sum game (for some people to have the benefit of our industry, other people must suffer). AI companies claim they must steal copyrighted works because they could not exist otherwise, or that they must be allowed to use as much electricity as they demand in spite of the costs. But it's B.S., and we should stop accepting this rhetoric. Forced to innovate to reduce harms, these industries have innovated, and made themselves even more profitable than they were when they were dragging their feet about it like children who don't want to clean their rooms.<br><br>From <a href="https://lpeproject.org/blog/is-climate-change-an-externality/" rel="nofollow">Is Climate Change An Externality</a><br><br><a href="/tags/ai/" rel="tag">#AI</a> <a href="/tags/genai/" rel="tag">#GenAI</a> <a href="/tags/generativeai/" rel="tag">#GenerativeAI</a> <a href="/tags/law/" rel="tag">#law</a> <a href="/tags/copyright/" rel="tag">#copyright</a> <a href="/tags/pollution/" rel="tag">#pollution</a> <a href="/tags/climate/" rel="tag">#climate</a> <a href="/tags/innovation/" rel="tag">#innovation</a><br>
