#YGO #ARC-V #DL

https://x.com/YuGiOh_DL_INFO/status/1846883502752780669

10/23!!!!! He's back!!!!! Yuri!!!!

dl

#YGO-ZEXAL #DL

https://x.com/punsukabooks/status/2003370537360457749

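Thank you for bringing Merag to the game... The regret that Merag and Ⅳ never spoke to each other in the anime has been made good in DL...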

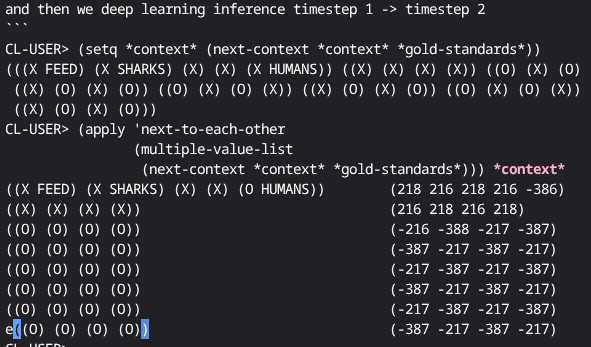

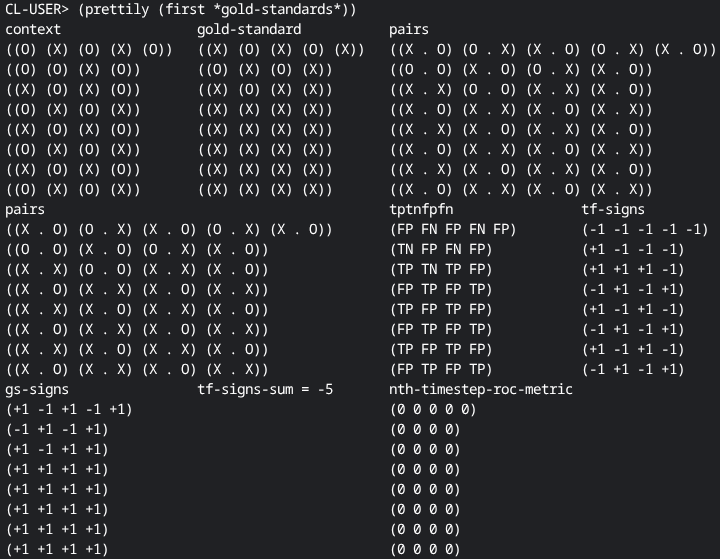

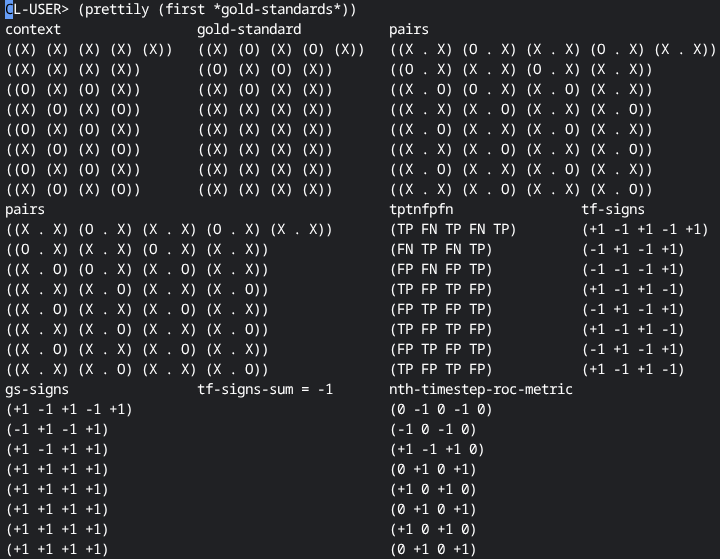

A better and #symbolic #deeplearning #algorithm in #commonLisp .

https://screwlisp.small-web.org/fundamental/a-better-deep-learning-algorithm/

I hope I come across as slightly tongue-in-cheek! Though all my points and notes are in fact genuine.

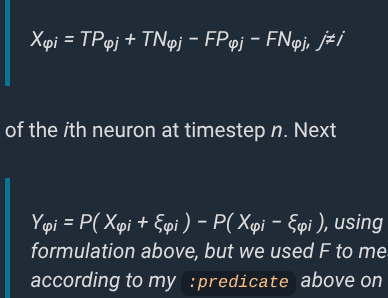

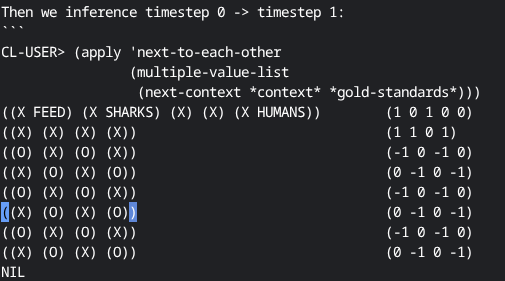

tl;dr I define completely explainable and interpretable deep learning / inferencing in terms of operations on sets of symbols.

I think it successfully underscores the wild misapprehensions about deep learning that abound in the wild. What do you think?

https://toobnix.org/w/9oA5WZEkbjKkfbsSxEoJ8v #archive

Okay I guess on the hour it's the Sunday-morning-in-Europe #lispyGopherClimate .

a. I will lightly go over my recent #commonLisp #symbolic #DL ( #ai!) algo / article.

b. Excitingly that gave me occasion to use

(this-function foo &rest keys &key &allow-other-keys)

which I used to pass data-like parameters along without cluttering up arguments (waters' functions of seven arguments). People had tried to explain it to me previously but now I underst
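For anyone else this had not clicked for, here is a minimal sketch of the `&rest keys &key &allow-other-keys` pattern. The names `outer`, `inner`, `:rate`, `:label` and `:unused` are hypothetical and not from my article; the only claim is that an outer function can collect arbitrary keyword arguments and hand them on untouched.

```lisp
;; Hypothetical names: OUTER, INNER, :RATE, :LABEL and :UNUSED are for
;; illustration only and do not come from the article.

(defun inner (x &key (rate 1) (label "none") &allow-other-keys)
  "Pick out only the keywords this function cares about."
  (list x rate label))

(defun outer (x &rest keys &key &allow-other-keys)
  "Accept any keyword arguments and forward them unchanged to INNER."
  ;; KEYS holds the whole keyword plist, so nothing has to be named here.
  (apply #'inner x keys))

;; :UNUSED passes through both functions without being declared anywhere.
(outer 10 :rate 3 :label "demo" :unused t)
```

The point of the design is that `outer` never needs a long lambda list: data-like parameters ride along in `keys`, and whichever function eventually needs one of them names it in its own `&key` list.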

As someone who now has an implementation of a feedforward neural network with a single hidden layer (in #commonLisp), I learned a lot about what can be slotted into the algorithm.

https://screwlisp.small-web.org/fundamental/sharpsign-sharpsign-input-referential-ffnn-dl-data/

Something that turns out to be easy to stick in is making training data which copies data out of the current context, or even simply pointing the training data definition into the #deepLearning inference input context, though I did not prove convergence for the latter case.

#lispyGopherClimate #lisp #technology #podcast #archive, #climate #haiku by @kentpitman

https://communitymedia.video/w/c3GdAXe7BQTbK3VrcXCm7E

& @ramin_hal9001

On the #climate I would like to talk about the company that found many (10ish) 0-day vulns in #curl and #openssl "using #ai" (#llm s were involved). #deeplearning

This obviously relates to my #lisp #symbolic #DL https://screwlisp.small-web.org/conditions/symbolic-d-l/ (ffnn equiv). Thanks to everyone involved with that so far.

I implemented that using #commonLisp #condition handling viz KMP.
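For readers who have not met the #commonLisp condition system, a generic sketch of the mechanism: signal a condition, offer a restart, and let a handler higher up decide what happens. This is not the code from the article; `unknown-symbol`, `lookup` and `lookup-with-default` are hypothetical names made up for the illustration.

```lisp
;; Hypothetical example of condition handling in general, not the
;; article's implementation.

(define-condition unknown-symbol (error)
  ((name :initarg :name :reader unknown-symbol-name))
  (:documentation "Signalled when NAME has no entry in the table."))

(defun lookup (name table)
  "Return the value stored for NAME in the alist TABLE.
Signals UNKNOWN-SYMBOL and offers a USE-VALUE restart when NAME is missing."
  (restart-case
      (or (cdr (assoc name table))
          (error 'unknown-symbol :name name))
    (use-value (value) value)))

(defun lookup-with-default (name table default)
  "Call LOOKUP, answering any UNKNOWN-SYMBOL condition with DEFAULT."
  (handler-bind ((unknown-symbol
                   (lambda (condition)
                     (declare (ignore condition))
                     ;; Resume inside LOOKUP instead of unwinding the stack.
                     (invoke-restart 'use-value default))))
    (lookup name table)))

(lookup-with-default 'b '((a . 1)) 0)
```

The low-level function only says what went wrong and what recoveries exist; the caller chooses one, and the stack is not unwound in between.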

Yeesh, okay it is out and I can stop telling you I am going to write it.

https://screwlisp.small-web.org/complex/lisp-feedforward-deep-learning-example/

#deepLearning #symbolic #ffnn pure ansi #commonLisp

The example deliberately causes a hallucination programmed into the training data via simple #roc reasoning. To my knowledge the ROC interpretation of ffnn inference is original to me, here.

The hallucination is in both lockstep and single #neuralnet activations.

Be the first to complain my #DL works on subregions of jagged lists! #programming

#itchio #gamedev #programming #theory completely explainable game-embeddable #deepLearning system using #roc #statistics .

I coded this one simply and iteratively, since a few people worked on reimplementing my #commonLisp code to-be-simpler.

The gist is that I show that deep learning updates (and, I indicate, training as well) are a simple true-positive/true-negative/false-positive/false-negative equation of the previous time step, in an eminently explorable way. #DL #AI
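To make the true-positive/false-positive vocabulary concrete, here is a minimal sketch of ordinary #roc bookkeeping over sets of symbols. It is not the update equation from the itch.io release; `confusion-counts` and `roc-rates` are hypothetical names, and the sketch assumes duplicate-free lists that are subsets of `universe`, with non-zero denominators.

```lisp
;; Hypothetical helpers: generic ROC bookkeeping, not the released code.
;; Assumes PREDICTED and ACTUAL are duplicate-free subsets of UNIVERSE.

(defun confusion-counts (predicted actual universe)
  "Return (values tp fp fn tn) for sets of symbols given as lists."
  (let* ((tp (length (intersection predicted actual)))
         (fp (length (set-difference predicted actual)))
         (fn (length (set-difference actual predicted)))
         (tn (- (length universe) tp fp fn)))
    (values tp fp fn tn)))

(defun roc-rates (tp fp fn tn)
  "Return (values true-positive-rate false-positive-rate) as rationals.
Assumes (+ TP FN) and (+ FP TN) are both non-zero."
  (values (/ tp (+ tp fn))
          (/ fp (+ fp tn))))

;; Active symbols at one time step compared with the symbols expected.
(multiple-value-call #'roc-rates
  (confusion-counts '(a b c) '(b c d) '(a b c d e)))
```

Everything here is counting on explicit sets, so each number can be traced back to the symbols that produced it.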

#lispyGopherClimate #live #technology #podcast (?!) https://toobnix.org/w/jQkCWCeFNRL9Utcr2GWurM

Chat live in #lambdaMOO as always https://lambda.moo.mud.org/

(@join screwtape

"hey

)

- I release NZ government secrets about #AI and #LLMs senior managers keep sending me

- I start /actually/ multimooing my own personal moos LambdaMOO

- Otherwise, my #commonLisp brain is entirely inside my #DL #DeepLearning #roc #statistics original formulation https://lispy-gopher-show.itch.io/dl-roc-lisp

@kentpitman featuring.

Hey everyone, on the hour it is the Sunday morning in Europe #peertube #ARCHIVE

https://toobnix.org/w/ktQA9DTKyAe9yhTdoJupxh

Do I actually even #code though?

A live #demo of two recent programs of mine.

https://lispy-gopher-show.itch.io/dl-roc-lisp

https://lispy-gopher-show.itch.io/nicclim

you can run in #commonLisp

(load "dl-roc.lisp")

.

#DL #AI #UI #madeWithLISP #programming #McCLIM

Miscellany

New ECL this week! https://ecl.common-lisp.dev/ @jackdaniel

#XMPP by Glenneth2 https://github.com/parenworks/CLabber

https://cyberhole.online/basic/ is this millennium's teletype

#lispyGopherClimate #technology #podcast #live https://toobnix.org/w/5QbQiLw7zrFiETgbv32kJk first half missing?

- One week from today is #LambdaMOO's annual festival, April Fool's.

- Let's have a text based #MUD #bonkwave pool party / concert

Notably /after/ submitting my #ELS2026 #commonLisp #deepLearning article https://european-lisp-symposium.org/2026

we just had this great thread: https://gamerplus.org/@screwlisp/116286095082069619 I will read #bookstodon and #programming suggestions by @riley and others. #ai #DL #ML